emergent math

Lessons, Commentary, Coaching, and all things mathematics.

Geoff Krall | geoff@emergentmath.com

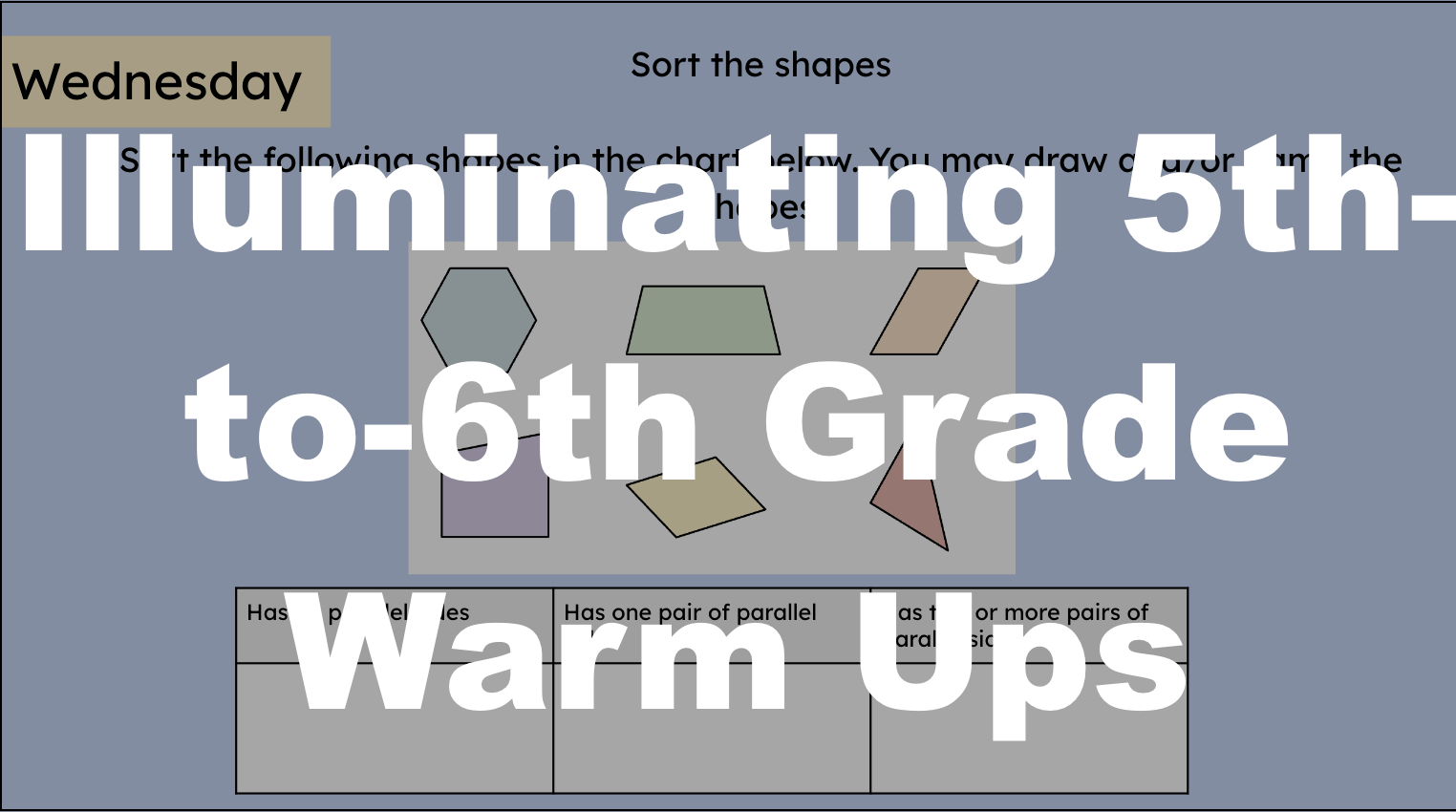

Illuminating 5th-to-6th Grade Warm Ups

Check out 180 complimentary warm ups intended to bridge the gap between 5th and 6th grade!

Algebra Warm Ups for Geometry Teachers

Check out 180 free Algebra warm ups intended to bridge the gap between Algebra 1 and Algebra 2!

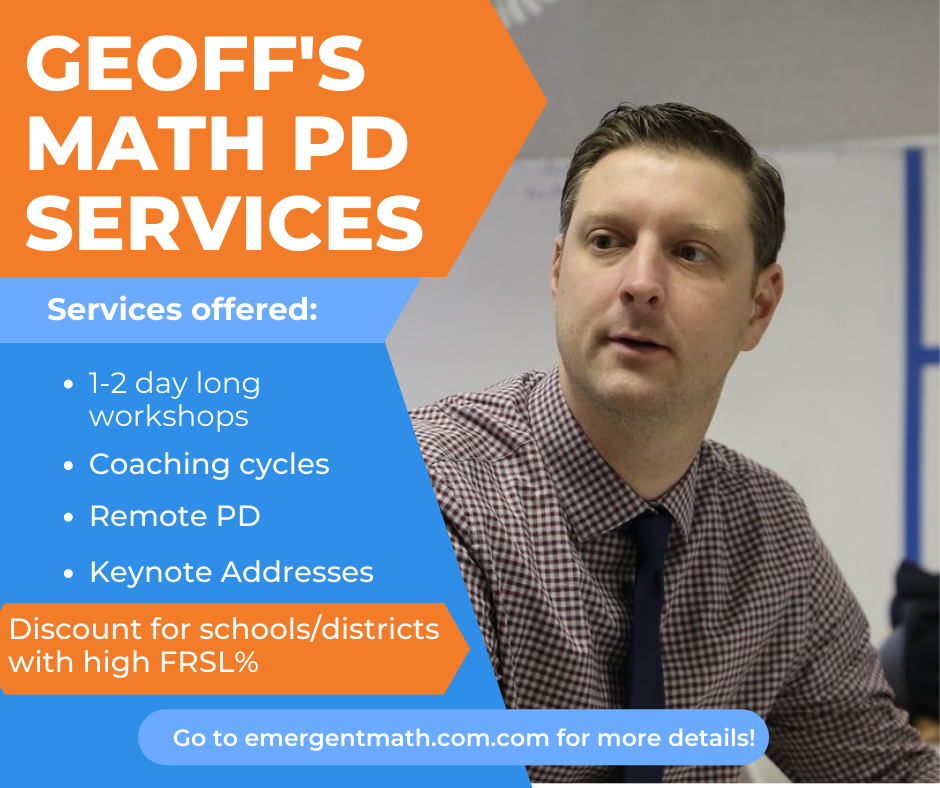

Coaching and PD

Want to learn more about how I can support you in person or virtually? Check out some of my Coaching, PD, and Workshop ideas.

Geoff’s Longform Math Education Miniseries

I’ve done a lot of longform blogging. I call these posts “mini-series”: each one is a deep dive into a particular aspect of math instruction.

Necessary Conditions

My book about math instruction and accompanying card sets for professional development use.

Feedback from Geoff’s Professional Development

“THANK YOU for helping to make teaching math more relevant and helping me feel at ease because I still suck at math but will always continue to learn all I can so as to reach out to more students to help them love math too!”

— Secondary Teacher, Idaho

“The resources provided were very helpful and easily applicable! There were several opportunities for participation and conversation that were non-threatening and reflective!”

— MS Teacher, Michigan

From the blog

-

How (not) to get your PhD Part 9: What comes next?

How (not) to get your PhD Part 9 (of 9): What comes next? What opportunities are available once you’ve passed your defense and completed your dissertation? Is there anything about my story that is applicable?

-

How (not) to get your PhD Part 8: Committee… Assemble!: Prelims, Quals, your defense and the moving of mountains

In the penultimate post of this mini-series, I discuss your doctoral committee, prelims, quals, IRB, and your defense: what they are, and why getting these things to line up makes it seem like a miracle that anyone gets their PhD.

-

How (not) to get your PhD Part 7: Let’s Talk About Your Dissertation: the thing that people just don’t want to do

Writing your dissertation may be the most challenging thing you have to do to get your PhD. This post explores what a dissertation actually is and how to ensure you get the thing done.

-

How (not) to get your PhD Part 6: Teach

Here’s something I did right during my time obtaining my PhD in Math Education: teaching. The thing I appreciated most about the UW program was the opportunity to teach several classes in several different modalities. Because I live an hour away from UW I was able to teach on-campus sporadically as both a Temporary Lecturer…

-

How (not) to get your PhD Part 5: Classes, Coursework, and You

It wasn’t until I was in my doctoral studies that I realized how much my day-to-day life was going to be changed by it. I’m starting to think I’m not great at looking ahead. My and my family’s routines and rituals that offer comfort and connection were temporarily scrambled.

-

How (not) to get your PhD Part 4: Do you like to write? You better.

Part 4 of “How (not) to get your PhD”. You’ll be doing a lot of writing in your doctoral program. It would behoove you to like writing, or at least tolerate it.

-

How (not) to get your PhD Part 3: What is a Math Education Doctoral Degree (and what it isn’t)

The information in this post is the biggest “I wish I’d known this…” of this entire blog series. I’m a bit reticent to follow up Part 1 with this post because I don’t want to overly focus on post-graduate job opportunities. Part 9 will also focus on post-graduate opportunities and my goal in Part 1…

-

How (not) to get your PhD Part 2: The right program at the right time at the right cost (if there is one)

Part 2: The right program at the right time at the right cost (if there is one). It actually took me two attempts to obtain a PhD. Don’t be like me on your first attempt. Find the right program at the right time. Oh, and maybe look into how much graduate college costs these days.

-

How (not) to get your PhD Part 1: Why (not) to get your PhD in Mathematics Education

The more inherent you can make your motivation for getting a PhD, the happier you’ll be. In May of 2020, in the midst of a global pandemic, I was laid off from my job at a non-profit due to COVID-related “budget concerns.” It is in that context – when the world was crumbling around…