This is Part 2 of a mini-series on a rubric masterclass. Be sure to check out the Intro post and subsequent posts.

We’ve spent the last 5+ years in the math education community advocating for teachers and students to have a growth mindset. We tell students that they can and, in fact, do continue to grow as mathematicians. And yet, our assessments tell a different story. And the scores that students receive on our assessments speak much louder than any neato infographic.

This is a slide I shared in my Shadowcon ’17 talk on “The Art of Mathematical Anthropology.” The graph demonstrates that students are typically measured against an ever-growing set of standards. And that’s okay, but it doesn’t communicate growth. In fact, it communicates the false notion that students aren’t growing, even as they are. In this example, the student is learning more mathematics, but their grade is hovering around whatever that orange line is (let’s say, 70); sometimes the grade is slightly below 70, other times slightly above.
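To make the graph’s point concrete, here is a minimal sketch with made-up numbers. The checkpoint counts and the flat 70 are illustrative assumptions, not data from the talk. It shows a percent grade holding steady while the number of standards the student has actually met keeps climbing.

```python
# Hypothetical numbers, not real data: standards assessed so far at each
# checkpoint in the year, and how many of them this student has met.
standards_assessed = [10, 20, 30, 40]
standards_met = [7, 14, 21, 28]

# Percent grade at each checkpoint: standards met out of standards assessed.
grades = [
    round(100 * met / total)
    for met, total in zip(standards_met, standards_assessed)
]

# Growth between checkpoints: how many additional standards were met.
growth = [later - earlier for earlier, later in zip(standards_met, standards_met[1:])]

print(grades)  # the grade never moves
print(growth)  # yet the student keeps learning new mathematics
```

The grade reports a ratio against a moving target, so it cannot show the second list at all.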

It wasn’t until I realized this fact, several years into teaching and even using rubrics, that I became an advocate of using rubrics. Constructing a rubric with this in mind, however, requires short-term and long-term intentionality. We are going to assess specific content contained within the task as well as the habits and dispositions of mathematicians we want to impart in our young mathematics pupils.

Specific Outcomes and Common Outcomes

We’re going to start using slightly jargony terms here, starting with outcomes. Outcomes are the things we want students to demonstrate. They may be content oriented, such as using an appropriate approach on a task or demonstrating competency on a particular standard. They may also be particular habits you wish to instill in students, such as metacognition or collaboration. One of the issues with numerical grading is that it distills all of these disparate skills into one, singular score. Rubrics have the benefit of getting specific on what students are excelling at and in what areas they need more help, but only if we are careful about how our scores are binned. More on that later.

For now, we’re thinking about what we want our students to do on a particular task. There are two categories of stuff we want students to do or produce in a task. The first category consists of things that are specific to the task itself. If you pulled the task from a particular section of a curriculum aligned to standards, specific outcomes would align with those standards. Eventually we’ll get even more specific, like, “correctly identified the two roots of the parabola as (-3,0) and (5,0).”

The second category of stuff consists of things that are not specific to the particular task, but rather things we want to assess over time. These are skills and habits we would like students to improve on that may appear on multiple tasks throughout the year. For example, using my Standards of Mathematical Practice rubric, you might wish to assess SMP 6 (Attend to Precision) over time, across multiple tasks. The indicators here are task-agnostic. The language isn’t tied to the specific task, but rather is generalized. These outcomes that are assessed over time are called common outcomes.

Typically I use the SMPs as my common outcomes, but specific school models might have other common outcomes that work as well. For example, my daughter attends a high school that has the following, agreed-upon learning domains:

- Emotional Intelligence and Wellness

- Design Thinking

- Communication, Composition and Reading

- Quantitative and Qualitative Reasoning

- Global Perspectives and Culture

- Leadership, Career, and Service

- Technical and Creative Expression

One could readily construct a rubric that draws upon these common outcomes for classroom use.

Common outcomes are often missing in rubrics I see in schools. That’s a bummer for a couple reasons. First, common outcomes, to me, are the real value-add of using rubrics: we can measure student growth over time if, and only if, we are using a common standard. Second, common outcomes articulate the areas in which students are growing.
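Here is a small sketch of what measuring growth against a common standard could look like. The task names and scores are hypothetical, and the four level names are the ones used later in this post. Because every task is scored on the same SMP 6 scale, the scores can be compared across the year.

```python
# The four rubric levels, lowest to highest.
LEVELS = ["emerging", "developing", "proficient", "advanced"]

# Hypothetical record of one student's SMP 6 level on three tasks.
smp6_history = [
    ("Task A", "emerging"),
    ("Task B", "developing"),
    ("Task C", "proficient"),
]

def level_change(history):
    """Return how many levels the student moved from the first task to the last."""
    first = LEVELS.index(history[0][1])
    last = LEVELS.index(history[-1][1])
    return last - first

print(level_change(smp6_history))
```

The comparison only works because the scale stays the same from task to task; scores on three unrelated, task-specific outcomes could not be subtracted this way.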

Upon selecting your task, you need to consider the specific and common things you wish students to demonstrate. The easiest way to do that is to work the task yourself. Find the best solutions and essential benchmarks along the way. As you’re working, think about what other habits align particularly well with the task. Does the task require the use of multiple representations? If so, you may wish to include a common outcome of connecting mathematics. Does the task require the student to make a case for something? You may wish to include an outcome relating to SMP 3, constructing viable arguments. Does the task facilitate the use of mathematical notation? Is that something you wish to teach to students over time? If so, consider making that an outcome (personally, I’ve aligned that to SMP 6).

At some point, however, you’ll have to stop adding outcomes. Be judicious about how many outcomes are reasonable to assess in a given task. If we overstuff our rubric we will not only burn ourselves out creating and scoring with the rubric, we will also make the rubric meaningless to students. We don’t want to overwhelm students with text and columns. We would rather be focused on a few things at the expense of leaving a few things out. If your rubric spans more than one physical page, it’s probably overstuffed. You could use one-page front-and-back eventually; but early on let’s stick with a single page.

My recommendation is to select 2-3 specific outcomes and 2-3 common outcomes. Early on, I’d stick to 2-and-2.

Let’s do this with our example task. For my common outcomes, it only makes sense to draw from the eight SMPs. I constructed the rubric using a process we’ll get into in the next post. Since I created that rubric ahead of time and I’ll be using parts of it regularly, not only does it communicate growth on our common outcomes, it’s also a heckuva time saver during the school year.

Recall from Part 1 that I’m using this task from Illustrative Mathematics. As I work through this task myself, I’m experiencing a bunch of potential SMPs:

MP 1: Make sense of problems and persevere in solving them

MP 2: Reason abstractly and quantitatively

MP 5: Use appropriate tools strategically

MP 6: Attend to precision

MP 7: Look for and make use of structure

MP 8: Look for and express regularity in repeated reasoning

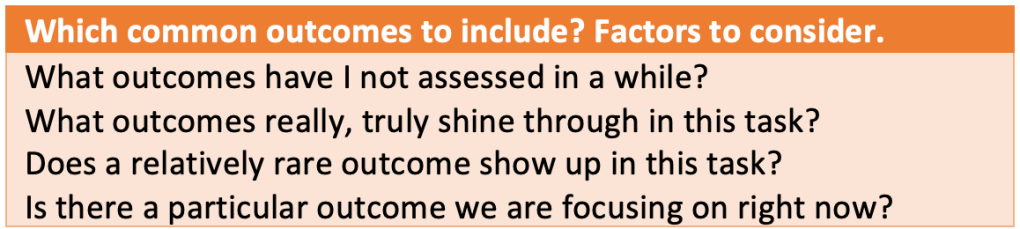

But I don’t want to assess on six different common outcomes. I’d rather assess a couple SMPs on a deep level than a bunch of SMPs quickly. So at this point I need to make a decision about which outcomes to include. I might decide to go with outcomes that I haven’t assessed in a while, or that I simply need more data on. I might select outcomes that are best represented by this problem. I might also choose to use outcomes that I want students to learn and demonstrate.

Personally, one of the reasons I really like this task is that it connects across content: establishing a system with one linear and one non-linear equation. My pre-designed SMP rubric puts that largely in SMP 7 (but also kinda in SMP 8); so I’ll go with SMP 7 for one of my common outcomes.

For my second common outcome, I decided to go with SMP 6: Attend to precision. My reasoning is that this task required a lot of notation and organization for me to solve.

Because this is such a rich and complex task, I could also tack on SMP 1: Make sense of problems and persevere in solving them. This task required a fair amount of persistence and sense-making. However, I’m going to try and keep this rubric to a single-sided page, and SMP 1 shows up pretty often in my Portfolio Problems, so I’ll pass on SMP 1 for now. I have other opportunities to collect data on SMP 1.

Now we identify the specific outcomes. We are asked two specific questions in this task: the location of Point Q and the location of Point P. As I see it, students need to demonstrate three things to fully answer this task:

- Finding Point Q requires students to have an understanding about how the y-intercept shows up algebraically.

- Finding Point P requires students to first create a linear equation from two points, …

- followed by equating the two equations.

But I don’t feel like including three specific outcomes. I want to include two. So let’s boil this down to the following:

Specific Outcome #1: Demonstrates understanding of the coordinate plane

Specific Outcome #2: Sets up and solves a system of equations

These might seem overly general at first. But remember that we are going to get into specifics via our indicators. Our indicators will be the more fine-grained skills and concepts students are going to demonstrate. Because I created my common outcomes rubric (my SMP rubric) at the beginning of the year, I can just copy-paste those outcomes along with their indicators in. Here’s what I have so far: my outcomes and my SMP indicators.

Notice that I’ve created a four-column rubric in which the top two rows are the common outcomes, and the bottom two as-yet incomplete rows will be my specific outcomes. The four categories I’ve chosen are emerging, developing, proficient, and advanced. While you can use different terms, it’s important to keep in mind where you’re defining “proficient” and what “proficient” means. We’re going to use this to inform the development of our specific indicators. That’ll come in the next post.
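If it helps to see the skeleton laid out, here is one way the rubric so far could be sketched as data. The `Outcome` class and the `unfinished` helper are hypothetical names made up for illustration, and the indicator text is deliberately left empty rather than guessed at.

```python
from dataclasses import dataclass, field

# The four columns of the rubric, lowest to highest.
LEVELS = ("emerging", "developing", "proficient", "advanced")

@dataclass
class Outcome:
    name: str
    kind: str  # "common" or "specific"
    # Maps a level name to its indicator text; empty until indicators are written.
    indicators: dict = field(default_factory=dict)

# Two common outcomes on top, two specific outcomes below.
rubric = [
    Outcome("SMP 7: Look for and make use of structure", "common"),
    Outcome("SMP 6: Attend to precision", "common"),
    Outcome("Demonstrates understanding of the coordinate plane", "specific"),
    Outcome("Sets up and solves a system of equations", "specific"),
]

def unfinished(rows):
    """Return the names of outcomes still missing an indicator for some level."""
    return [
        row.name
        for row in rows
        if any(level not in row.indicators for level in LEVELS)
    ]

print(unfinished(rubric))
```

In practice the two common rows would arrive pre-filled from the SMP rubric, so only the two specific rows would remain on the unfinished list.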

Be sure to subscribe to Emergent Math if you’d like to receive every new post in your inbox. If you’re new here, you can check out my featured posts page or other mini-series (Routines, Lessons, Problems, and Projects & Your Math Syllabus Boot Camp).

Additional posts in this mini-series:

Intro: A Rubric Masterclass

Part 1: Selecting Rubric Worthy Tasks

Part 2: Establishing Common and Specific Outcomes

Part 3: Defining Proficiency and Moving Outward

Part 4: Scores, scoring, grades, and grading

Part 5: Teaching with a rubric; teaching the rubric

Part 6: Humility in Grading